Google Search Console is the most important free SEO tool for understanding how your website performs in Google Search. It gives you direct data from Google about clicks, impressions, indexing, technical issues, and user experience signals. In 2026, with AI-driven search systems and evolving ranking factors, relying on guesswork is no longer enough. You need clear, accurate data straight from the source.

This complete guide explains how to set up, analyze, and use Google Search Console effectively. You will learn how to improve rankings, fix indexing problems, optimize performance reports, and make smarter SEO decisions using real search data.

Introduction to Google Search Console

Google Search Console is a free tool from Google that helps you monitor, maintain, and improve your website’s visibility in Google Search. It shows how your site appears in search results and highlights issues that may stop pages from ranking properly. In 2026, with AI-powered search and evolving algorithms, understanding real search data is critical for growth. Google Search Console gives you direct feedback from Google itself, not guesses from third-party tools.

If you want better rankings, stronger technical SEO, and clear performance tracking, this tool is essential. It helps you fix errors, improve content, and align your site with how Google actually crawls and indexes pages.

What is Google Search Console?

Google Search Console is a free SEO platform provided by Google that tracks your website’s performance in search results. It shows impressions, clicks, rankings, indexing status, and technical errors directly from Google’s database.

This matters because real data helps you make smarter SEO decisions. Instead of relying on estimates, you see exactly how Google views your pages. In 2026, where AI search favors structured and optimized content, having this visibility is a competitive advantage.

Using Google Search Console, you can monitor traffic trends, fix indexing issues, and improve keyword targeting. It is the foundation of any serious SEO strategy.

How does Google Search Console work?

Google Search Console works by collecting data from Google’s crawl, index, and ranking systems and presenting it inside your account dashboard. Once you verify ownership of your website, Google begins reporting how your pages perform in search.

It tracks which queries trigger your pages, how often users click, and whether Google can properly crawl and index your content. This is important in 2026 because technical errors, crawl inefficiencies, and structured data gaps directly affect AI-driven search visibility.

By reviewing reports regularly, you can identify ranking drops, indexing problems, and page experience issues. This allows you to fix problems before they hurt traffic and revenue.

What data does Google Search Console show?

Google Search Console shows performance data, indexing status, page experience signals, and technical error reports. The Performance report displays clicks, impressions, average position, and click-through rate for each query and page.

It also shows which pages are indexed, which are excluded, and why. You can monitor Core Web Vitals, mobile usability, structured data errors, and security issues. In 2026, this data is essential because AI search systems prioritize technically clean, well-structured websites.

With this information, you can optimize low-CTR keywords, fix crawl errors, and strengthen content visibility. It turns search data into clear, actionable improvements.

Who should use Google Search Console?

Google Search Console should be used by website owners, SEO specialists, marketers, developers, and content creators. Anyone responsible for organic traffic or website performance benefits from it.

For businesses in 2026, relying only on analytics tools is not enough. You need direct feedback from Google to stay competitive in AI-powered search environments. Developers use it to fix crawl and indexing issues. Marketers use it to improve keyword performance. Content teams use it to refine strategy.

If your website depends on search traffic for leads, sales, or brand visibility, Google Search Console is not optional; it is essential.

Why Google Search Console Matters for SEO

Google Search Console matters for SEO because it gives you direct insight into how Google views, crawls, and ranks your website. It removes guesswork and replaces it with real performance data from Google itself. In 2026, where AI-driven search systems reward technically clean and well-structured sites, this visibility is critical.

Without Google Search Console, you are optimizing blindly. With it, you can detect ranking drops, fix crawl errors, and improve underperforming pages before traffic declines further. It connects technical SEO, content strategy, and performance tracking in one place.

For any serious SEO strategy, Google Search Console is not just helpful; it is foundational.

How does GSC help SEO?

Google Search Console helps SEO by showing which keywords drive impressions, which pages get clicks, and where ranking opportunities exist. It highlights pages with high impressions but low click-through rates, helping you optimize titles and meta descriptions.

This matters in 2026 because AI-enhanced search prioritizes relevance and engagement signals. If users rarely click your result, you capture little of that visibility, and rankings may slip over time. GSC shows you exactly where that gap exists.

It also identifies technical problems like mobile usability errors and structured data issues. Fixing these improves crawl efficiency and strengthens overall site authority.

Can Google Search Console improve rankings?

Google Search Console does not directly increase rankings, but it helps you make changes that improve them. It provides data-driven insights so you can optimize content, fix errors, and strengthen weak pages.

In 2026, ranking improvements depend on precision. Small technical issues, poor CTR, or indexing delays can limit growth. GSC highlights these weaknesses clearly.

By optimizing pages with high impressions but low positions, updating content based on query data, and resolving technical errors, you create measurable ranking gains. The tool itself does not rank pages, but the actions you take from its data can.

How does GSC help with indexing and crawling?

Google Search Console helps with indexing and crawling by showing which pages are indexed, excluded, or facing errors. The Page Indexing report explains why pages are not appearing in search results.

This is critical in 2026 because crawl efficiency impacts visibility in AI-powered search systems. If Google cannot crawl or index your pages properly, they cannot rank.

You can submit sitemaps, request indexing, and monitor crawl issues directly in GSC. Fixing coverage errors, redirect problems, and blocked resources ensures Google understands your site structure clearly. Better crawling leads to better indexation and stronger search performance.

Setting Up Google Search Console

Setting up Google Search Console correctly ensures Google can collect accurate data from your website. The process starts with adding your website as a property and verifying ownership. In 2026, proper setup is critical because AI-driven search systems rely on complete crawl and indexing signals. If your property is misconfigured, you may miss important performance data or indexing alerts.

A clean setup ensures full visibility across subdomains, protocols, and URLs. It also allows you to submit sitemaps, monitor indexing, and track real keyword data from day one.

Correct configuration at the start prevents reporting gaps and technical blind spots later.

Domain Property vs URL Prefix — what’s the difference?

A Domain Property tracks all URLs across every subdomain and protocol, while a URL Prefix property tracks only the exact URL entered. Domain Property includes HTTP, HTTPS, www, non-www, and all subdomains automatically.

This matters in 2026 because websites often use multiple subdomains and secure protocols. If you only track a URL Prefix, you may miss important data.

Domain Property requires DNS verification but gives complete coverage. URL Prefix is easier to verify but limited in scope. For full SEO visibility, Domain Property is usually the better choice.

How do you verify your property in GSC?

You verify your property by proving ownership of the website using one of Google’s approved methods. Verification ensures that only authorized users can access performance data.

This step matters because without verification, Google will not share search data. Once verified, Search Console starts building reports, though data can take a few days to appear for a newly added property.

Choose the method that matches your access level. DNS works best for Domain Properties, while HTML tag or tracking-based methods work well for URL Prefix properties.

DNS verification explained

DNS verification requires adding a TXT record to your domain’s DNS settings. Google checks this record to confirm ownership.

This is the most secure and recommended method for Domain Properties in 2026. It covers all subdomains and protocols automatically.

After adding the TXT record, allow time for DNS propagation, then click verify inside GSC.
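Before clicking verify, it can help to confirm the record is actually visible. The sketch below assumes you have already looked up the domain's TXT values with whatever DNS tool you use; the record list and the token are made-up placeholders. It only checks that one value matches the `google-site-verification=` string Google asks you to add.

```python
def has_verification_record(txt_values, expected_token):
    """Return True if any TXT value matches the Google verification string."""
    expected = f"google-site-verification={expected_token}"
    # DNS tools often return TXT values wrapped in quotes, so strip them first.
    return any(value.strip().strip('"') == expected for value in txt_values)


# Hypothetical TXT values for a domain; the token is a placeholder.
records = [
    '"v=spf1 include:_spf.example.com ~all"',
    '"google-site-verification=abc123placeholder"',
]
print(has_verification_record(records, "abc123placeholder"))
```

If this returns False right after you add the record, DNS propagation is the most likely cause, so wait and check again before retrying verification.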

HTML tag method

The HTML tag method requires adding a meta tag inside your website’s <head> section. Google checks for this tag to confirm ownership.

This method is simple and works well if you control the website code. However, removing the tag later can break verification.

It is best suited for URL Prefix properties when DNS access is not available.
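A quick way to confirm the tag is where Google expects it is to parse the page and look for it inside the head. This is a minimal sketch using Python's standard HTML parser; the sample page and token are placeholders, and the class and function names are illustrative.

```python
from html.parser import HTMLParser


class VerificationTagFinder(HTMLParser):
    """Collects google-site-verification meta tags found inside <head>."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.tokens = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "meta" and self.in_head:
            attributes = dict(attrs)
            if attributes.get("name") == "google-site-verification":
                self.tokens.append(attributes.get("content"))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_verification_tokens(html):
    """Return the content values of verification meta tags inside <head>."""
    finder = VerificationTagFinder()
    finder.feed(html)
    return finder.tokens


# Placeholder page source; the token is not a real verification value.
page = (
    "<html><head><title>Home</title>"
    '<meta name="google-site-verification" content="abc123placeholder" />'
    "</head><body><p>Hello</p></body></html>"
)
print(find_verification_tokens(page))
```

An empty list means the tag is missing or sits outside the head, which are the two cases most likely to break verification.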

Google Analytics property verification

Google Analytics verification works if the same Google account has edit access to the Analytics property installed on the site.

Google checks the tracking code to confirm ownership. This method is quick and does not require code edits if Analytics is already active.

It works only for URL Prefix properties and requires proper user permissions.

Google Tag Manager verification

Google Tag Manager verification works if the GTM container snippet is installed and you have publish permissions.

Google checks the container code to confirm site ownership. This method is convenient for marketing teams managing tags.

Like Analytics verification, it applies to URL Prefix properties only.

Common verification errors and how to fix them

Common verification errors include incorrect DNS records, delayed DNS propagation, missing HTML tags, or insufficient account permissions.

In 2026, most issues happen due to copying the wrong TXT value or placing it in the wrong DNS field. Always double-check the exact record type and value.

If using HTML tag verification, ensure the tag is placed inside the <head> section and not removed after publishing. For Analytics or GTM methods, confirm you have full edit or publish rights.

What to configure right after verification

Right after verification, submit your XML sitemap and check the Page Indexing report. This ensures Google starts crawling important URLs quickly.

Next, review manual actions, security issues, and Core Web Vitals. Early detection prevents ranking problems later.

Finally, connect Google Search Console with Analytics for deeper insights. In 2026, combining performance data with user behavior metrics gives a stronger SEO advantage.

Google Search Console Dashboard Explained

The Google Search Console dashboard gives you a quick overview of your website’s search performance, indexing status, and technical health. It acts as the control center where you monitor how Google views your site. In 2026, with AI-driven search systems relying heavily on structured and clean data, this dashboard becomes even more important.

From one screen, you can detect traffic drops, indexing errors, and experience issues before they impact rankings. It helps you prioritize fixes based on real search data instead of assumptions.

Understanding each section of the dashboard allows you to move from reactive SEO to proactive optimization.

What does the Performance summary show?

The Performance summary shows clicks, impressions, average position, and click-through rate (CTR) from Google Search. It gives a high-level snapshot of how your site performs in search results.

This matters in 2026 because visibility alone is not enough. High impressions with low clicks may signal weak titles or poor relevance. AI-enhanced results reward engagement signals.

By reviewing trends regularly, you can identify ranking drops, seasonal shifts, and keyword opportunities. It helps you optimize pages that already have visibility but need stronger performance.

What is the Indexing summary?

The Indexing summary shows how many pages are indexed and how many are excluded. It highlights errors, warnings, and reasons why pages may not appear in search results.

This is critical because pages that are not indexed cannot rank. In 2026, crawl efficiency directly impacts search visibility in AI-powered systems.

The report helps you detect issues like “Crawled - currently not indexed,” redirect errors, or blocked pages. Fixing these problems improves index coverage and strengthens overall search presence.

What is the Experience overview?

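The Experience overview shows Core Web Vitals and HTTPS status. Earlier versions of Search Console also listed a Mobile Usability report here, which Google retired in December 2023. The section focuses on how users experience your website.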

This matters because Google prioritizes fast, mobile-friendly, and secure websites. Poor page experience can reduce ranking potential even if content is strong.

Monitoring this section helps you detect slow pages, layout shifts, and mobile errors. Improving these signals enhances user satisfaction and supports long-term SEO stability.

What do Manual Actions and Security Issues sections show?

The Manual Actions section shows whether Google has applied penalties due to guideline violations. The Security Issues section highlights malware, hacked content, or unsafe pages.

These reports are essential because penalties or security warnings can remove pages from search results completely. In 2026, trust signals are even more important for visibility.

Checking these sections ensures your site complies with Google’s policies and remains safe for users. Quick action protects rankings, traffic, and brand credibility.

Performance Report (Core Feature)

The Performance report in Google Search Console shows exactly how your website performs in Google Search. It displays real search data including clicks, impressions, CTR, and average position. This is the most important report for SEO because it connects visibility with user behavior. In 2026, where AI-powered results prioritize engagement and intent alignment, understanding this data is critical.

Instead of guessing which keywords work, you see which queries trigger your pages and how users respond. It helps you find ranking opportunities, content gaps, and low-CTR pages that need optimization.

If used correctly, the Performance report becomes your primary decision-making tool for traffic growth.

What are Clicks, Impressions, CTR, and Position?

Clicks are the number of times users clicked through to your website from search results. Impressions show how often your pages appeared in search results. CTR (click-through rate) is the percentage of impressions that resulted in clicks. Average position is the average of your site’s topmost ranking across the searches where it appeared.
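CTR is simply clicks divided by impressions, expressed as a percentage. A small sketch of that arithmetic, with illustrative numbers:

```python
def ctr_percent(clicks, impressions):
    """Click-through rate as a percentage of impressions."""
    if impressions == 0:
        return 0.0  # no impressions means there is nothing to divide by
    return 100.0 * clicks / impressions


# Example: 50 clicks from 2,000 impressions.
print(ctr_percent(50, 2000))  # 2.5
```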

These metrics matter because they reveal both visibility and engagement. In 2026, high impressions with low CTR may signal weak titles or mismatched intent.

Tracking these together helps you identify pages that rank but do not attract clicks, and pages that need ranking improvements to drive more traffic.

How do you filter the Performance report?

You can filter the Performance report to analyze specific segments of your search data. Filtering allows deeper SEO insights instead of viewing broad averages.

This matters in 2026 because SEO decisions must be precise. Broad data hides opportunities and problems. Filters help you isolate exactly where growth or decline is happening.

Queries

Filter by queries to see which keywords trigger your pages. This helps identify ranking gaps and content opportunities.

Pages

Filter by pages to analyze individual URL performance. You can see which pages need optimization or updates.

Countries

Filter by country to understand geographic performance. This is useful for international SEO strategy.

Devices

Filter by device to compare desktop vs mobile performance. Mobile-first indexing makes this especially important.

Search appearance

Filter by search appearance to analyze results like rich snippets or product listings. This helps optimize structured data.

Date range comparison

Compare date ranges to detect growth, traffic drops, or algorithm impact. This supports trend analysis and forecasting.
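The same filters can be expressed when pulling Performance data programmatically. The sketch below only builds a request body as a plain dictionary; it does not call any API. The field names (`startDate`, `dimensions`, `dimensionFilterGroups`, and so on) and the `MOBILE` device value are believed to match the Search Console API's Search Analytics query format, but treat them as assumptions and verify them against the official API reference before use.

```python
def build_query_body(start_date, end_date, dimensions, filters=None, row_limit=1000):
    """Build a Search Analytics style request body (no network call is made).

    filters maps a dimension name to the exact value it must equal,
    for example {"device": "MOBILE"}.
    """
    body = {
        "startDate": start_date,
        "endDate": end_date,
        "dimensions": list(dimensions),
        "rowLimit": row_limit,
    }
    if filters:
        body["dimensionFilterGroups"] = [{
            "filters": [
                {"dimension": name, "operator": "equals", "expression": value}
                for name, value in filters.items()
            ]
        }]
    return body


# Queries and pages for mobile searches in January (dates are illustrative).
body = build_query_body(
    "2026-01-01", "2026-01-31",
    dimensions=["query", "page"],
    filters={"device": "MOBILE"},
)
print(body)
```

To compare date ranges, build two bodies with different dates and compare the returned rows query by query.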

How to use Performance report for SEO insights?

Use the Performance report to find high-impression keywords with low CTR and optimize titles and meta descriptions. Identify queries ranking between positions 8–20 and improve content to push them onto page one, or higher up it if they are already there.
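Once the report is exported, both checks are simple filters. The sketch below assumes each row is a dictionary with `query`, `impressions`, `ctr` (as a fraction), and `position` keys; the sample rows and the thresholds (1,000 impressions, 2% CTR) are made up for illustration and should be tuned to your own site.

```python
def find_opportunities(rows, min_impressions=1000, max_ctr=0.02):
    """Split rows into low-CTR candidates and queries ranking in positions 8-20."""
    low_ctr = [
        row for row in rows
        if row["impressions"] >= min_impressions and row["ctr"] < max_ctr
    ]
    near_page_one = [row for row in rows if 8 <= row["position"] <= 20]
    return low_ctr, near_page_one


# Hypothetical exported rows.
rows = [
    {"query": "gsc setup", "impressions": 1500, "ctr": 0.008, "position": 6.2},
    {"query": "verify domain property", "impressions": 900, "ctr": 0.044, "position": 11.5},
    {"query": "sitemap errors", "impressions": 1200, "ctr": 0.075, "position": 3.1},
]

low_ctr, near_page_one = find_opportunities(rows)
print([row["query"] for row in low_ctr])
print([row["query"] for row in near_page_one])
```

Low-CTR rows are candidates for title and meta description work; rows in the 8 to 20 band are candidates for content improvements.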

In 2026, small ranking shifts can significantly impact traffic due to AI-enhanced search layouts. Precision optimization is key.

Regular analysis helps you update underperforming pages, strengthen internal linking, and refine keyword targeting. The Performance report turns raw search data into clear, actionable SEO improvements.

URL Inspection Tool

The URL Inspection Tool in Google Search Console lets you check how Google sees a specific page on your website. It shows indexing status, crawl details, canonical selection, and any detected issues. This tool is essential in 2026 because AI-driven search systems rely on accurate indexing and clean page signals.

Instead of guessing why a page is not ranking, you can see whether Google crawled it, indexed it, or encountered problems. It gives direct feedback from Google’s index.

Using this tool regularly helps you diagnose visibility issues quickly and take corrective action before traffic is affected.

What information does URL Inspection provide?

The URL Inspection Tool provides indexing status, last crawl date, crawl type, canonical URL selection, and enhancement data such as mobile usability or structured data.

This matters because ranking issues often come from indexing or canonical problems, not just content weaknesses. In 2026, technical clarity strongly influences AI search visibility.

You can also see whether the page is eligible for rich results and whether any errors are blocking enhancements. This makes it a powerful troubleshooting tool for technical SEO.

How to check if a page is indexed

To check if a page is indexed, paste the full URL into the URL Inspection search bar. Google will show whether the page is in the index.

If the page is indexed, it will confirm eligibility for search results. If not, it will explain the reason for exclusion.

This is important because non-indexed pages cannot rank. Checking indexing status ensures your important content is actually visible in Google Search.

What does “Crawled - currently not indexed” mean?

“Crawled - currently not indexed” means Google has visited the page but decided not to add it to the index.

This usually happens due to low content quality, duplication, weak internal linking, or unclear value. In 2026, AI-based evaluation systems are more selective about indexing thin or redundant pages.

To fix this, improve content depth, strengthen internal links, and ensure the page provides clear search intent value. Then request reindexing after updates.

How to request indexing

To request indexing, inspect the URL and click “Request Indexing.” Google will re-queue the page for crawling.

This is useful after publishing new content or fixing technical errors. However, it does not guarantee immediate indexing.

In 2026, Google prioritizes quality and authority. Use indexing requests strategically after meaningful updates, not repeatedly without improvements.

Page Indexing & Coverage Report

The Page Indexing & Coverage report shows which pages on your website are indexed, which are not, and why. It helps you understand how Google processes your URLs after crawling them. In 2026, with AI-driven search systems prioritizing quality and technical clarity, index management is more important than ever.

If key pages are not indexed, they cannot rank or generate traffic. This report highlights errors, exclusions, and crawl problems so you can fix them quickly.

Monitoring this section regularly ensures your most valuable pages are discoverable, properly indexed, and eligible for search visibility.

What does the Coverage report show?

The Coverage report, now labeled Page indexing in Search Console, shows the total number of indexed pages and details about pages that are excluded or have errors. It explains why Google did or did not index each URL.

This matters because indexing is the foundation of SEO. Without it, rankings are impossible. In 2026, Google is stricter about indexing low-value or duplicate pages.

The report helps you detect technical barriers, content quality issues, and structural problems that limit search visibility.

Errors vs Valid pages

Errors are serious issues preventing pages from being indexed, such as server errors or blocked resources. Valid pages are successfully indexed and eligible to appear in search.

This distinction is important because errors require immediate attention, while valid pages confirm healthy indexing.

Tracking this balance ensures your site maintains strong index coverage and avoids hidden technical losses.

What are Warnings?

Warnings indicate potential issues that do not fully block indexing but may affect performance.

These may include pages indexed with minor concerns or redirect-related signals. In 2026, even small technical weaknesses can reduce AI search visibility.

Review warnings carefully to prevent them from turning into larger indexing problems.

What is Excluded?

Excluded pages are URLs Google chose not to index. This may happen due to noindex tags, duplicate content, canonicalization, redirects, or low-value pages.

Not all exclusions are bad. Some are intentional, like filtered pages or thank-you pages.

However, accidental exclusions can limit traffic. Regular review ensures important content is not mistakenly excluded.

What are common indexing issues?

Common indexing issues include “Crawled - currently not indexed,” duplicate pages without proper canonical tags, soft 404 errors, and blocked resources in robots.txt.
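Blocked resources are one of the easier issues to check yourself. This sketch uses Python's standard `urllib.robotparser` against a robots.txt string, so nothing is fetched over the network. The rules and URLs are examples, and Python's parser does not replicate every detail of how Googlebot matches rules (wildcards in particular), so treat the result as a first pass rather than a final answer.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt rules disallow the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Example rules; replace with the contents of your own robots.txt.
robots = """User-agent: *
Disallow: /private/
"""

print(is_blocked(robots, "https://example.com/private/page"))
print(is_blocked(robots, "https://example.com/blog/post"))
```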

In 2026, content quality and internal linking strongly influence index decisions. Thin or poorly connected pages are often skipped.

Identifying these patterns helps improve crawl efficiency and content visibility.

How to fix common crawl errors?

Fix crawl errors by resolving server issues, correcting redirect chains, updating broken internal links, and improving page quality.

Ensure your sitemap includes only valuable, indexable URLs. Strengthen internal linking to signal importance.

After fixing issues, validate them inside Google Search Console. This confirms improvements and supports faster reprocessing.
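Redirect chains are easy to spot once you have a map of which URL redirects where. The sketch below assumes you have built that map yourself (for example from a crawl); the paths are placeholders. It follows each hop, stops on loops, and caps the number of hops.

```python
def redirect_chain(start_url, redirects, max_hops=10):
    """Follow redirects from start_url and return every URL visited in order."""
    chain = [start_url]
    seen = {start_url}
    current = start_url
    while current in redirects and len(chain) <= max_hops:
        current = redirects[current]
        chain.append(current)
        if current in seen:  # redirect loop: stop instead of cycling forever
            break
        seen.add(current)
    return chain


# Hypothetical redirect map: /old -> /older-new -> /new
redirects = {"/old": "/older-new", "/older-new": "/new"}
chain = redirect_chain("/old", redirects)
print(chain)
print("needs flattening:", len(chain) > 2)
```

A chain longer than two entries means more than one hop, so the first URL should redirect straight to the final destination.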

Sitemaps in Google Search Console

Sitemaps in Google Search Console help Google discover and prioritize important pages on your website. Submitting a sitemap ensures that search engines understand your site structure clearly. In 2026, where AI-driven search systems evaluate crawl efficiency and content hierarchy, a clean sitemap improves visibility.

A sitemap does not guarantee rankings, but it improves crawl guidance. It tells Google which pages matter most and when they were last updated.

For large websites, eCommerce stores, or frequently updated blogs, submitting and monitoring sitemaps inside Google Search Console is essential for maintaining strong index coverage.

What is an XML sitemap?

An XML sitemap is a file that lists important URLs on your website in a structured format. It helps search engines discover and crawl pages efficiently.

This matters because Google may not find all pages through internal links alone, especially on large or complex sites. In 2026, structured crawl signals support better indexing decisions.

A proper XML sitemap includes only indexable, high-value URLs and avoids redirects, duplicates, or noindex pages.
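As an illustration of that structure, the sketch below builds a small sitemap with Python's standard library and skips any page flagged as not indexable. The page list and the `indexable` flag are assumptions made for this example; the `urlset`, `url`, `loc`, and `lastmod` elements and the namespace follow the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML containing only the pages marked as indexable."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if not page.get("indexable", True):
            continue  # leave out noindex, redirected, or duplicate URLs
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["loc"]
        if "lastmod" in page:
            ET.SubElement(url, "lastmod").text = page["lastmod"]
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Hypothetical pages; the thank-you page is intentionally excluded.
pages = [
    {"loc": "https://example.com/", "lastmod": "2026-01-15"},
    {"loc": "https://example.com/blog/", "lastmod": "2026-01-20"},
    {"loc": "https://example.com/thank-you/", "indexable": False},
]
print(build_sitemap(pages))
```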

How to submit a sitemap in GSC

To submit a sitemap, go to the Sitemaps section in Google Search Console and enter the sitemap URL (for example, yoursite.com/sitemap.xml). Then click submit.

This action signals Google to review the listed URLs for crawling and indexing. It does not force indexing but improves discovery efficiency.

After submission, Google will process the file and display status updates inside the dashboard.

How to read the sitemap status

The sitemap status shows whether the file was successfully fetched and how many URLs were discovered. It may display “Success,” “Has errors,” or “Couldn’t fetch.”

This information matters because fetch errors or mismatched URL counts can signal technical problems.

Review the number of discovered URLs and compare it with indexed pages in the Coverage report to ensure proper alignment.
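If you export both lists, the comparison comes down to two set differences. This is a rough sketch; the URL lists are placeholders standing in for your sitemap contents and an export of indexed pages.

```python
def compare_sitemap_to_index(sitemap_urls, indexed_urls):
    """Report URLs that appear in only one of the two lists."""
    sitemap_set, indexed_set = set(sitemap_urls), set(indexed_urls)
    return {
        "submitted_not_indexed": sorted(sitemap_set - indexed_set),
        "indexed_not_submitted": sorted(indexed_set - sitemap_set),
    }


# Hypothetical data.
sitemap_urls = ["https://example.com/", "https://example.com/blog/", "https://example.com/pricing/"]
indexed_urls = ["https://example.com/", "https://example.com/blog/", "https://example.com/tag/old/"]

report = compare_sitemap_to_index(sitemap_urls, indexed_urls)
print(report)
```

Submitted but not indexed URLs deserve a look in URL Inspection; indexed but not submitted URLs may be pages you never meant to expose.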

What sitemap errors mean and how to fix them

Common sitemap errors include incorrect URL formats, non-indexable pages, server errors, or blocked URLs.

In 2026, submitting low-quality or duplicate URLs can reduce crawl efficiency. Always include only canonical, indexable pages.

Fix errors by correcting invalid URLs, removing redirects or noindex pages from the sitemap, and ensuring the file is accessible. After updates, resubmit the sitemap for validation.

Experience & Page Experience Signals

Experience and Page Experience signals in Google Search Console measure how users experience your website in real-world conditions. These reports focus on speed, stability, mobile usability, and security. In 2026, Google’s AI-driven ranking systems heavily consider user experience signals because they reflect real satisfaction, not just keywords.

If your pages are slow, unstable, or difficult to use on mobile, rankings can suffer even if content is strong. The Experience section helps you identify performance gaps that directly impact visibility.

Improving these signals increases user trust, reduces bounce rates, and strengthens long-term SEO stability.

What is Core Web Vitals in Google Search Console?

Core Web Vitals are performance metrics that measure loading speed, interactivity, and visual stability. They are based on real user data collected from Chrome users.

These metrics matter because Google uses them as ranking signals. In 2026, fast and stable pages are prioritized in competitive search results.

Improving Core Web Vitals enhances both SEO and user satisfaction, leading to stronger engagement and higher conversion potential.

Largest Contentful Paint (LCP)

Largest Contentful Paint measures how long it takes for the main content of a page to load.

A good LCP score means users see meaningful content quickly. Slow LCP can reduce rankings and increase bounce rates.

Improving server speed, optimizing images, and using efficient hosting can lower LCP time and improve performance.

First Input Delay (FID) / Interaction to Next Paint (INP)

FID measured initial responsiveness, but INP now evaluates overall interaction responsiveness throughout the page lifecycle.

In 2026, INP is the primary responsiveness metric. Slow interaction times harm user experience and SEO signals.

Reducing heavy JavaScript and improving script execution speed helps improve responsiveness.

Cumulative Layout Shift (CLS)

Cumulative Layout Shift measures unexpected layout movements while a page loads.

High CLS frustrates users because elements shift suddenly, causing misclicks.

Reserving space for images and ads and stabilizing dynamic content reduces CLS and improves user trust.
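Each of the three metrics is rated as good, needs improvement, or poor. The sketch below applies Google's published thresholds (LCP 2.5 s and 4 s, INP 200 ms and 500 ms, CLS 0.1 and 0.25); the metric keys and sample values are illustrative.

```python
# (good up to, poor above) for each metric
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),   # Largest Contentful Paint, seconds
    "inp_ms": (200, 500),  # Interaction to Next Paint, milliseconds
    "cls": (0.1, 0.25),    # Cumulative Layout Shift, unitless
}


def rate(metric, value):
    """Classify a Core Web Vitals value as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


# Sample measurements for one page.
page_metrics = {"lcp_s": 3.1, "inp_ms": 180, "cls": 0.31}
for metric, value in page_metrics.items():
    print(metric, value, rate(metric, value))
```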

HTTPS and Mobile Usability reports

The HTTPS report confirms whether your pages are served securely. Secure connections are essential for user trust, and Google treats HTTPS as a lightweight ranking signal.

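The Mobile Usability report identified issues affecting smartphone users. Google retired it from Search Console in December 2023, so mobile problems now need to be checked with tools such as Lighthouse. Since Google uses mobile-first indexing, mobile errors still directly impact rankings.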

Fixing insecure pages and mobile layout issues ensures full search eligibility in 2026’s mobile-dominant landscape.

How Page Experience impacts SEO

Page Experience impacts SEO by influencing ranking potential and user engagement signals.

While content relevance remains primary, poor experience metrics can limit competitive rankings. In 2026, AI systems evaluate both content quality and user satisfaction.

Optimizing speed, stability, and mobile usability strengthens your ranking foundation and supports long-term organic growth.

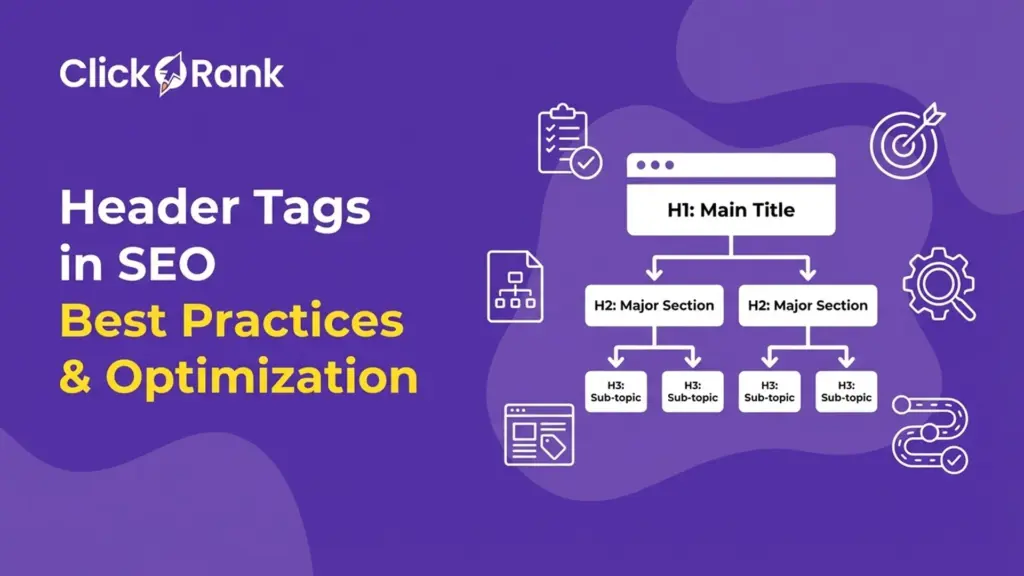

Enhancement Reports (Structured Data)

Enhancement Reports in Google Search Console show how your structured data is performing and whether your pages qualify for rich results. These reports highlight detected schema types, errors, and valid items. In 2026, structured data plays a major role in AI-driven search and generative results. Clear entity signals and structured markup improve visibility beyond traditional blue links.

If your schema contains errors, your pages may lose eligibility for rich snippets like FAQs or product ratings. Monitoring Enhancement Reports ensures your markup stays valid and optimized.

Strong structured data improves click-through rates, enhances SERP appearance, and supports entity-based indexing in modern search.

What enhancement types are shown?

Enhancement types shown depend on the structured data detected on your site. Common types include Breadcrumbs, FAQ, How-to, Products, and Review snippets.

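Breadcrumbs improve navigation clarity in search results. FAQ schema can display expandable answers, though since 2023 Google shows FAQ rich results mainly for well-known government and health sites. How-to markup supports instructional content, but Google no longer shows How-to rich results. Product schema enables pricing and availability display. Review snippets show ratings and trust signals.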

In 2026, rich results increase visibility and engagement. Proper schema implementation helps your content stand out in competitive AI-enhanced search layouts.

What do enhancement errors mean?

Enhancement errors mean Google detected issues in your structured data markup. These errors prevent your pages from qualifying for rich results.

Errors may include missing required fields, invalid values, or incorrect schema formatting. In 2026, invalid structured data weakens entity clarity and reduces SERP enhancements.

Warnings may still allow eligibility but indicate incomplete markup. Regular monitoring prevents loss of rich result visibility.

How to fix structured data issues?

Fix structured data issues by reviewing error details in the Enhancement report and correcting the markup on affected pages.

Ensure all required properties are present and formatted correctly according to schema guidelines. Use proper JSON-LD structure and validate changes before publishing.
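As one example of well-formed JSON-LD, the sketch below generates breadcrumb markup and runs a basic presence check before publishing. The helper names, the sample trail, and the short list of fields being checked are illustrative assumptions, not Google's full requirements, so confirm the required properties for your schema type in the official documentation.

```python
import json


def breadcrumb_jsonld(trail):
    """Build BreadcrumbList markup from (name, url) pairs, in order."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": index, "name": name, "item": url}
            for index, (name, url) in enumerate(trail, start=1)
        ],
    }


def missing_fields(data, required=("@context", "@type", "itemListElement")):
    """Return the top-level fields that are absent or empty."""
    return [field for field in required if not data.get(field)]


# Hypothetical breadcrumb trail.
data = breadcrumb_jsonld([
    ("Home", "https://example.com/"),
    ("Blog", "https://example.com/blog/"),
])

print('<script type="application/ld+json">')
print(json.dumps(data, indent=2))
print("</script>")
print("missing:", missing_fields(data))
```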

After fixing issues, use the “Validate Fix” option in Google Search Console. This confirms corrections and restores eligibility for enhanced search features.

Links Report (Backlinks + Internal Links)

The Links Report in Google Search Console shows which websites link to you and how your internal pages connect to each other. It provides direct data from Google about your link structure. In 2026, link signals still influence authority, trust, and crawl efficiency, especially in AI-enhanced search systems.

External links help Google measure credibility. Internal links help Google understand content hierarchy and page importance.

Monitoring this report allows you to strengthen weak pages, improve topical clusters, and identify authority-building opportunities. It turns link data into clear SEO action steps.

How does GSC show external links?

Google Search Console shows external links by listing top linking sites, top linked pages, and anchor text data.

This matters because backlinks signal authority and relevance. Pages with more high-quality external links often rank better in competitive niches.

You can review which pages attract the most links and replicate their structure or content strategy. It also helps detect unusual or spam-like link patterns that may require review.

How does GSC show internal link count?

The Links Report shows internal links by listing pages ordered by how many internal links point to them.

This is important because internal linking signals page priority and improves crawl efficiency. In 2026, strong internal structure supports entity clarity and AI indexing.

If key pages have low internal link counts, they may struggle to rank. Strengthening internal connections improves visibility and distributes authority effectively.
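One way to spot key pages with low internal link counts is to export the internal links table and compare it against a list of pages you care about. The sketch below assumes a CSV with the columns page and internal_links, a hypothetical KEY_PAGES set, and an arbitrary minimum of 10 links. Rename the columns to match your own export.

```python
import csv
import io

# Sample data standing in for an export of the internal links table.
# The column names are assumptions; rename them to match your own export.
EXPORT = """page,internal_links
https://example.com/,120
https://example.com/pricing,4
https://example.com/blog/guide,35
https://example.com/contact,2
"""

# Hypothetical list of pages you consider strategically important.
KEY_PAGES = {"https://example.com/pricing", "https://example.com/blog/guide"}


def weakly_linked(export_text: str, key_pages: set[str], minimum: int) -> list[tuple[str, int]]:
    """Return key pages whose internal link count is below the minimum."""
    rows = csv.DictReader(io.StringIO(export_text))
    weak = [
        (row["page"], int(row["internal_links"]))
        for row in rows
        if row["page"] in key_pages and int(row["internal_links"]) < minimum
    ]
    # Fewest links first, so the weakest page is at the top.
    return sorted(weak, key=lambda item: item[1])


for page, count in weakly_linked(EXPORT, KEY_PAGES, minimum=10):
    print(f"{page}: only {count} internal links")
```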

How to use the links report to improve SEO

Use the Links Report to identify high-authority pages and add internal links from them to weaker, strategic pages.

Analyze top-linked content to understand why it earns backlinks, then create similar high-value resources.

In 2026, structured internal linking and strong backlink profiles remain essential ranking factors. Regularly reviewing this report helps you strengthen authority, improve crawl flow, and boost overall search performance.

Manual Actions & Security Issues

Manual Actions & Security Issues in Google Search Console show whether your website has been penalized or compromised. These reports protect your visibility by alerting you to serious problems that can remove pages from search results. In 2026, with stronger spam detection and AI-based quality review systems, compliance and site security are critical.

A manual action can reduce rankings or fully deindex pages. Security issues like malware warnings can block users from visiting your site.

Monitoring this section regularly helps protect traffic, trust, and long-term SEO stability.

What are Manual Actions?

Manual Actions are penalties applied by Google’s human reviewers when a site violates search guidelines.

Common reasons include spam content, unnatural backlinks, cloaking, or thin affiliate pages. These actions directly affect rankings or indexation.

In 2026, AI systems flag suspicious patterns faster, but manual reviews still enforce policy violations. A manual action requires correction before rankings recover.

How to view why a Manual Action was applied

You can view the reason for a Manual Action inside the Manual Actions report in Google Search Console.

The report explains the specific violation and whether it affects the entire site or specific pages.

This clarity matters because fixing the wrong issue wastes time. Always review the exact reason before taking corrective action.

How to fix Manual Actions

Fix Manual Actions by resolving the guideline violation mentioned in the report.

Remove spam content, disavow unnatural backlinks if necessary, correct misleading markup, and improve content quality.

After fixing issues, submit a reconsideration request explaining the changes made. Transparent correction increases the chance of penalty removal.

What are Security Issues in GSC?

Security Issues in Google Search Console alert you if your site contains hacked content, malware, deceptive pages, or unsafe downloads.

These issues can trigger warning labels in search results, damaging trust and traffic.

In 2026, security is tightly linked to ranking stability and user safety signals.

How to fix hacked content or malware issues

Fix hacked content or malware issues by cleaning infected files, removing malicious code, and updating vulnerable plugins or software.

Change passwords, strengthen server security, and scan your site thoroughly.

After resolving the issue, request a security review inside Google Search Console. This helps remove warnings and restore search visibility.

Advanced SEO Use Cases (Practical GSC Use)

Advanced SEO use cases in Google Search Console focus on turning raw data into strategic growth decisions. Instead of just monitoring clicks and impressions, you use the data to identify opportunities, weaknesses, and competitive gaps. In 2026, where AI search systems reward precision and intent alignment, surface-level analysis is not enough.

Google Search Console allows you to detect ranking friction, engagement gaps, and geographic or device-based performance differences.

When used correctly, it becomes a forecasting and optimization engine. It helps you prioritize updates that generate measurable traffic gains instead of random content changes.

How to analyze query performance for optimization

To analyze query performance, open the Performance report and filter by queries. Sort by impressions to find keywords where your site appears frequently but ranks outside the top positions.

This matters because high-impression queries signal demand. Moving from position 12 to position 7, for example, typically takes a result from the second page to the first, which can significantly increase traffic.

Focus on optimizing content depth, improving search intent match, and refining internal linking for those queries.
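The filtering step can be sketched in Python once the query data is exported. The QueryRow class, the sample rows, and the thresholds (at least 500 impressions, positions 8 to 20) are assumptions for illustration, so tune them to your own traffic levels.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    query: str
    clicks: int
    impressions: int
    position: float  # average position as reported in the Performance report


def optimization_candidates(rows, min_impressions=500, best=8.0, worst=20.0):
    """Return high-impression queries ranking between best and worst, most impressions first."""
    picked = [
        r for r in rows
        if r.impressions >= min_impressions and best <= r.position <= worst
    ]
    return sorted(picked, key=lambda r: r.impressions, reverse=True)


# Made-up sample rows.
rows = [
    QueryRow("search console guide", 40, 3200, 11.4),
    QueryRow("gsc login", 900, 4000, 1.2),
    QueryRow("fix indexing errors", 12, 900, 14.8),
    QueryRow("seo glossary", 1, 60, 18.0),
]

for r in optimization_candidates(rows):
    print(f"{r.query}: {r.impressions} impressions at position {r.position}")
```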

How to flag underperforming pages

Flag underperforming pages by opening the Pages tab in the Performance report and comparing clicks, impressions, and average position for each URL.

Pages with high impressions but low CTR may need title and meta description improvements. Pages with declining impressions may require content refreshes.

Regularly identifying weak pages helps prevent gradual traffic decay and supports continuous SEO growth.
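A minimal sketch of this check, assuming you have page-level clicks and impressions for two equally long periods. The function name, the sample figures, and the thresholds (1% CTR floor, 1,000 impressions, 20% decline) are hypothetical and only show the shape of the logic.

```python
def flag_pages(current, previous, ctr_floor=0.01, min_impressions=1000, decline=0.20):
    """Label pages as 'low CTR' and/or 'declining impressions'.

    current and previous map page URL -> (clicks, impressions) for two
    equally long periods.
    """
    flags = {}
    for page, (clicks, impressions) in current.items():
        reasons = []
        # Only judge CTR when there are enough impressions to be meaningful.
        if impressions >= min_impressions and clicks / impressions < ctr_floor:
            reasons.append("low CTR")
        old_impressions = previous.get(page, (0, 0))[1]
        # Flag a drop of more than the given fraction versus the previous period.
        if old_impressions and impressions < old_impressions * (1 - decline):
            reasons.append("declining impressions")
        if reasons:
            flags[page] = reasons
    return flags


# Made-up sample data.
current = {"/pricing": (8, 2000), "/blog/guide": (150, 1500), "/about": (30, 400)}
previous = {"/pricing": (10, 2100), "/blog/guide": (300, 3000), "/about": (28, 390)}

for page, reasons in flag_pages(current, previous).items():
    print(page, "->", ", ".join(reasons))
```

Pages flagged for low CTR are candidates for title and description work, while pages flagged for declining impressions are candidates for a content refresh.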

How to find variation in clicks vs impressions

Compare clicks and impressions to detect performance imbalance.

If impressions are high but clicks are low, your snippet may not be compelling or aligned with intent. If clicks are strong but impressions are limited, ranking improvements are needed.

In 2026, optimizing CTR is as important as improving rankings because engagement signals influence visibility.

How to compare performance by device or country

Use filters to compare device performance (mobile vs desktop) or analyze country-specific traffic.

Mobile-first indexing makes device comparison critical. Weak mobile metrics can limit rankings. Country filters help refine international SEO strategy.

Segmenting data ensures your optimization strategy matches real audience behavior, not broad averages.
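To make the segmentation concrete, the sketch below aggregates exported rows by device or country and computes CTR per segment. The row layout, the field names, and the sample numbers are assumptions for this example.

```python
from collections import defaultdict


def ctr_by_segment(rows, segment_key):
    """Aggregate clicks and impressions per segment and return the CTR of each."""
    totals = defaultdict(lambda: [0, 0])  # segment -> [clicks, impressions]
    for row in rows:
        totals[row[segment_key]][0] += row["clicks"]
        totals[row[segment_key]][1] += row["impressions"]
    return {
        segment: (clicks / impressions if impressions else 0.0)
        for segment, (clicks, impressions) in totals.items()
    }


# Made-up sample rows.
rows = [
    {"device": "MOBILE", "country": "usa", "clicks": 30, "impressions": 1000},
    {"device": "DESKTOP", "country": "usa", "clicks": 50, "impressions": 1000},
    {"device": "MOBILE", "country": "esp", "clicks": 10, "impressions": 1000},
]

for segment, ctr in ctr_by_segment(rows, "device").items():
    print(f"{segment}: {ctr:.1%} CTR")
for segment, ctr in ctr_by_segment(rows, "country").items():
    print(f"{segment}: {ctr:.1%} CTR")
```

In this sample, the blended CTR hides the fact that mobile performs well below desktop, which is exactly the kind of gap a broad average conceals.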

Weekly and Monthly GSC Review Checklist

A weekly and monthly Google Search Console review ensures your SEO strategy stays proactive instead of reactive. Regular monitoring helps you detect traffic drops, indexing problems, and performance shifts before they become major losses. In 2026, with AI-driven search volatility and faster algorithm adjustments, consistent review cycles are essential.

Google Search Console provides direct feedback from Google’s systems, although the data is processed with some delay rather than in real time. Ignoring it for weeks can mean missed growth opportunities or unnoticed technical errors.

A structured checklist keeps your optimization focused, measurable, and aligned with real search data.

What to check every week in GSC

Every week, review the Performance report for sudden drops in clicks or impressions. Compare the last 7 days with the previous period to detect unusual changes.

Check the Page Indexing report for new errors or spikes in excluded pages. Monitor Manual Actions and Security Issues to ensure compliance.

Weekly checks help you react quickly to technical issues, ranking declines, or crawl disruptions before traffic is heavily impacted.
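The week-over-week comparison can be sketched as follows. The three-day reporting lag, the click totals, and the 15% alert threshold are assumptions for illustration and should be adjusted to what you observe in your own property.

```python
from datetime import date, timedelta


def last_two_weeks(today: date, lag_days: int = 3):
    """Return (current, previous) 7-day windows as inclusive (start, end) date pairs.

    The current window ends lag_days before today. The default lag is an
    assumption that allows for reporting delay.
    """
    end = today - timedelta(days=lag_days)
    current = (end - timedelta(days=6), end)
    previous = (current[0] - timedelta(days=7), current[0] - timedelta(days=1))
    return current, previous


def percent_change(current_value: float, previous_value: float) -> float:
    """Percentage change from the previous period to the current one."""
    if previous_value == 0:
        return 0.0
    return (current_value - previous_value) * 100 / previous_value


current, previous = last_two_weeks(date.today())
print("Compare", current, "with", previous)

change = percent_change(current_value=800, previous_value=1000)  # made-up click totals
if change <= -15:  # hypothetical alert threshold
    print(f"Clicks changed by {change:.1f}%, investigate")
```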

What to analyze every month

Every month, analyze long-term trends in clicks, CTR, and average position. Identify pages ranking between positions 8–20 and prioritize optimization.

Review Core Web Vitals and Experience reports to detect performance improvements or declines. Audit internal link distribution using the Links report.

Monthly analysis focuses on strategy, growth opportunities, and performance forecasting rather than short-term fluctuations.

How to prioritize issues

Prioritize issues based on impact and urgency.

Fix critical errors first, such as indexing failures, manual actions, or security problems. Next, address high-impression pages with low CTR or ranking gaps.

In 2026, SEO success depends on focusing on changes that influence visibility at scale. Always prioritize fixes that affect high-traffic or revenue-driving pages.
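One simple way to turn this ordering into a repeatable rule is to sort issues by severity first and by affected traffic second. The Issue class, the two categories, and the sample entries below are hypothetical. This is one possible scoring scheme, not a standard one.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    category: str         # "critical" or "opportunity"
    affected_clicks: int  # monthly clicks of the pages the issue touches


# Hypothetical severity ranks: critical issues always outrank opportunities.
SEVERITY = {"critical": 0, "opportunity": 1}


def prioritize(issues):
    """Order issues by severity first, then by affected traffic (highest first)."""
    return sorted(issues, key=lambda i: (SEVERITY[i.category], -i.affected_clicks))


# Made-up backlog.
backlog = [
    Issue("Title rewrite for /pricing", "opportunity", 5000),
    Issue("Blog posts not indexed", "critical", 1200),
    Issue("Malware warning", "critical", 9000),
]

for position, issue in enumerate(prioritize(backlog), start=1):
    print(position, issue.name)
```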

AI-Powered Configuration in Google Search Console

AI-powered configuration in Google Search Console is a feature that allows you to describe the analysis you want in natural language. Instead of manually applying filters and comparisons, you simply type what you need, and the system configures the Performance report automatically. In 2026, as search becomes more AI-driven, faster data interpretation is essential.

This feature automatically applies filters, selects metrics, and sets up comparisons based on your request. It reduces manual steps and eliminates complex report setup errors.

AI-powered configuration helps SEO teams move from data setup to decision-making faster.

What is AI-powered configuration in GSC?

AI-powered configuration in GSC lets you describe your desired analysis in plain language.

For example, you can request a filtered report without clicking through multiple settings. The system understands intent and prepares the report instantly.

This matters in 2026 because SEO decisions must be quick and precise. Automating report setup saves time and improves workflow efficiency.

How AI helps streamline Performance report analysis

AI streamlines analysis by automatically applying filters by query, page, country, device, search appearance, or date range.

It can also set up complex comparisons, such as custom date range comparisons, without manual configuration. Additionally, it selects the most relevant metrics like Clicks, Impressions, Average CTR, and Average Position automatically.

This reduces friction in data analysis and ensures consistent reporting standards across teams.

Example use cases of AI-powered configuration

You can request: “Show me queries on phone searches that contain ‘sports’ in the last 6 months.” The system filters by device, query keyword, and time range instantly.

You can ask: “Compare traffic for pages containing ‘/blog’ this quarter vs same quarter last year.” AI configures the comparison automatically.

Other examples include: “Show Average CTR and Average Position of queries in Spain in the last 28 days,” or “Show clicks for pages that include the word ‘Google’.”

These use cases demonstrate how AI-powered configuration accelerates advanced SEO analysis.
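For teams that also pull data through the Search Console API, the first request above corresponds roughly to a Search Analytics query body. The sketch below only builds the request dictionary and does not call the API. The helper name, the row limit, and the 182-day approximation of six months are assumptions, and the sketch says nothing about how the AI feature works internally.

```python
from datetime import date, timedelta


def phone_queries_containing(term: str, today: date, days: int = 182) -> dict:
    """Build a Search Analytics request body for mobile queries containing term."""
    return {
        "startDate": (today - timedelta(days=days)).isoformat(),
        "endDate": today.isoformat(),
        "dimensions": ["query"],
        "dimensionFilterGroups": [
            {
                "groupType": "and",
                "filters": [
                    # Restrict results to searches made on phones.
                    {"dimension": "device", "operator": "equals", "expression": "MOBILE"},
                    # Keep only queries that contain the given term.
                    {"dimension": "query", "operator": "contains", "expression": term},
                ],
            }
        ],
        "rowLimit": 1000,
    }


body = phone_queries_containing("sports", date.today())
print(body["startDate"], "to", body["endDate"])
```

Writing the filters out this way is also a useful habit when reviewing what the AI-configured report actually applied, since each part of the plain-language request should map to one filter or date setting.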

Current limitations

AI-powered configuration currently works only for the Performance report in Search results. It does not apply to Discover or News reports.

This matters because if you rely on Discover traffic or Google News visibility, you must still configure those reports manually. In 2026, multi-surface search visibility is important, so understanding this limitation prevents reporting gaps.

AI may also misinterpret complex or vague requests. Always review applied filters and metrics before drawing conclusions. Additionally, it cannot sort tables or export data automatically, so manual refinement is still required for deeper analysis.

How to use AI-powered configuration effectively

Use natural language queries to save time when setting up filters and comparisons. Be clear and specific in your request to reduce misinterpretation.

After AI configures the report, double-check filters, date ranges, and selected metrics for accuracy. Combine AI setup with traditional filters when you need precise segmentation.

In 2026, the goal is efficiency, not automation for its own sake. Focus on extracting insights and making optimization decisions, rather than spending time on manual configuration.

Common Mistakes to Avoid in Google Search Console

Common mistakes in Google Search Console usually happen when users collect data but fail to act on it correctly. The tool provides powerful insights, but misinterpretation or neglect can lead to lost traffic and missed opportunities. In 2026, with AI-driven ranking systems reacting quickly to technical and engagement signals, small oversights can create significant performance drops.

Many websites lose rankings not because of algorithm penalties, but because issues go unnoticed inside GSC.

Avoiding these common mistakes ensures your SEO decisions are accurate, proactive, and aligned with real search data.

Ignoring indexing errors

Ignoring indexing errors means important pages may never appear in search results.

If pages show errors or exclusions and are not fixed, they cannot rank or generate traffic. In 2026, Google is increasingly selective and indexes only pages it judges to be of sufficient quality.

Regularly reviewing and resolving indexing issues ensures your key content remains visible and competitive.

Not filtering queries properly

Not filtering queries properly leads to broad, misleading conclusions.

Looking at overall averages hides keyword-level opportunities. High-impression queries may be buried in the data.

Use filters to isolate specific queries, devices, or countries so you can make targeted SEO improvements instead of general adjustments.
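A short example of why averages mislead: the sketch below splits queries into branded and non-branded groups with a regular expression and computes CTR for each. The brand name "acme", the function name, and the sample rows are made up for illustration.

```python
import re


def ctr_by_brand(rows, brand_pattern):
    """Return CTR for branded and non-branded queries separately."""
    brand = re.compile(brand_pattern, re.IGNORECASE)
    totals = {"branded": [0, 0], "non_branded": [0, 0]}  # [clicks, impressions]
    for query, clicks, impressions in rows:
        bucket = "branded" if brand.search(query) else "non_branded"
        totals[bucket][0] += clicks
        totals[bucket][1] += impressions
    return {
        bucket: (clicks / impressions if impressions else 0.0)
        for bucket, (clicks, impressions) in totals.items()
    }


# Made-up rows: (query, clicks, impressions).
rows = [
    ("acme shoes", 400, 1000),
    ("acme store near me", 100, 1000),
    ("running shoes for flat feet", 20, 4000),
    ("best trail shoes", 20, 4000),
]

for bucket, ctr in ctr_by_brand(rows, r"\bacme\b").items():
    print(f"{bucket}: {ctr:.1%} CTR")
```

In this sample the overall CTR is 5.4%, which looks healthy, yet non-branded queries convert at only 0.5%. The filter exposes the segment that actually needs work.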

Misreading clicks vs impressions

Misreading clicks and impressions often results in wrong optimization decisions.

High impressions with low clicks mean a low CTR. If the average position is already strong, the snippet is the likely cause. If the position is weak, rankings are. Low impressions may indicate ranking issues or indexing gaps.

Understanding this difference helps you decide whether to improve titles or strengthen content depth.

Not reviewing the Page Indexing report regularly

Not reviewing the Page Indexing report (formerly called the Coverage report) regularly allows technical issues to grow unnoticed.

New errors can appear after site updates, migrations, or plugin changes. In 2026, technical stability directly impacts AI-based ranking systems.

Regular checks of this report, ideally as part of your weekly review, ensure your site remains clean, crawlable, and fully indexed.

What is Google Search Console and how does it work?

Google Search Console is a free web service from Google that lets website owners monitor how their site performs in search, check indexing status, find crawl errors, and optimize visibility in Google Search results. It shows data about impressions, clicks, and issues that may affect a site’s presence in search results.

When will data start appearing in Google Search Console after setup?

After you verify your site in Google Search Console, data usually starts appearing in the Performance report within about 48 hours. Metrics like clicks and impressions begin populating once Google starts processing your site’s search activity.

What does the Page Indexing (Coverage) report in Google Search Console show?

The Page Indexing report, previously named the Coverage report, shows the indexing status of your pages. It groups URLs into indexed and not indexed and lists the reason for each exclusion, helping you understand which pages are indexed and why some are not.

How do sitemaps help in Google Search Console?

Submitting an XML sitemap in Google Search Console helps Google discover your pages more efficiently. The Sitemaps section shows when Google last read your sitemap, how many URLs were discovered, and if there were any errors in the file.
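If your platform does not generate a sitemap for you, a basic file can be produced with Python's standard library. This is a minimal sketch: the build_sitemap helper and the example URLs are assumptions, and a production sitemap may need more than loc and lastmod entries.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemap protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages) -> str:
    """Build sitemap XML from (url, last_modified) pairs; dates use YYYY-MM-DD."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified
    # Serialize to UTF-8 bytes with an XML declaration, then decode to text.
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True).decode("utf-8")


# Made-up example pages.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2026-01-15"),
    ("https://example.com/blog/guide", "2026-02-01"),
])
print(sitemap_xml)
```

Save the output as a file on your site, then submit its URL in the Sitemaps section.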

Why is the Performance report important in Google Search Console?

The Performance report shows search traffic data like clicks, impressions, average CTR, and average position. It helps you see which queries and pages are driving traffic and where opportunities exist to improve SEO visibility.

What are Core Web Vitals in Google Search Console?

Core Web Vitals are a set of user experience metrics that measure loading performance (Largest Contentful Paint, LCP), interactivity (Interaction to Next Paint, INP), and visual stability (Cumulative Layout Shift, CLS). The Core Web Vitals report helps identify pages that need performance improvements.
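Each metric is rated against published thresholds: LCP is good at 2.5 seconds or less and poor above 4 seconds, INP is good at 200 milliseconds or less and poor above 500 milliseconds, and CLS is good at 0.1 or less and poor above 0.25. The sketch below applies those thresholds to a single measurement. The function name and the sample values are assumptions for illustration.

```python
# Thresholds per metric: (upper bound for "good", upper bound for "needs improvement").
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout shift score
}


def rate(metric: str, value: float) -> str:
    """Classify a measurement as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


# Made-up measurements for one page.
page_metrics = {"LCP": 3.1, "INP": 180, "CLS": 0.31}

for metric, value in page_metrics.items():
    print(f"{metric} = {value}: {rate(metric, value)}")
```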